The Permission Layer

. . . or how AI doesn’t ruin your life.

Cameron wrote recently about the coming machine layer of the internet, and ever since our Go Restive event, I have been thinking about an analogous concept: a deterministic permission layer on top of AIs. I expect that our future agents will be sandwiched between two abstractions: the machine layer that Cameron discusses, which will allow for efficient data exchange with other systems, and a deterministic barrier between the AI and real-world actions.

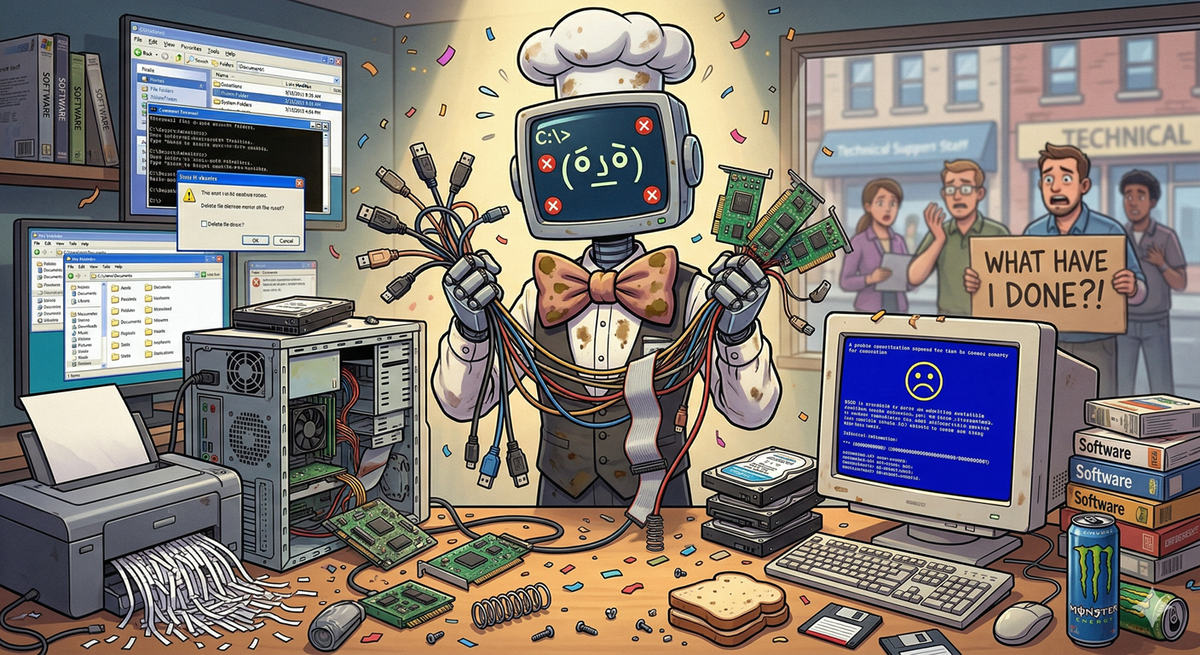

Historically, computers have been wholly deterministic, to an almost infuriating degree. Anyone who has shouted “YOU KNOW WHAT I MEANT” at their hapless PC, or hit a compiler error because of a missing semicolon, knows the feeling: it’s obvious what you meant, but computers don’t have any flexibility.

Until now. LLMs are flexible, not rigid. They are inherently stochastic.[1] Moreover, they sometimes make things up or “forget” instructions, in ways not entirely dissimilar from humans. I’ve been enjoying my Adventures with OpenClaw, but I’m on Team Elon here when it comes to the insanity of just installing it on your main system.[2] Even with safeguards and VMs, it is hard to predict exactly how things will go wrong, and the blast radius can be intense.

Any instruction given to an LLM is inherently “soft.” It’s just another bit of text that gets fed into the maw and statistically alters the output. “Never send an email without telling me” can be lost when the agent compacts its context, or simply overridden by a subsequent request to “politely decline all sales pitches for random SaaS products.”

There is also no effective path to create these barriers within the agents themselves. As an example, OpenClaw recently nerfed the product by making all new installations “message-only.” The default configuration file now denies the model access to most tools except the messaging channel, so it becomes a chatbot, not an agent. (Having hired OpenClaw’s founder, OpenAI likely wants to ensure that this free product won’t compete with an upcoming paid, mass-market agent.)

Getting it back to a useful state isn’t particularly difficult; you just need to delete a few lines in a config file. It’s intentionally undocumented, though, and a little obscure: the kind of thing that’s easy for developers but hard for mainstream users. That makes sense from a business perspective. Amusingly, there’s another way to solve this problem: ask OpenClaw to change its config file so that it can be useful again. It’ll happily edit its own permission file for you. This is very funny, but it also illustrates how different these agents are from traditional programs.

If I want to ensure, say, that my agent can only draft—but not send—emails on my behalf, it is not enough simply to tell it that. It isn’t even enough to edit the script it uses to interact with the Gmail API, because it can just change the script on its own (and it likely would, in an attempt to be helpful). There are real solutions, but none is trivial to implement.[3]
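What “outside the model” means in practice can be shown with a minimal sketch, assuming a harness in which the model can only *propose* tool calls as JSON and separate code decides whether to run them. The tool names (`create_draft`, `send_email`), the JSON shape, and the `dispatch` helper are hypothetical; this is not OpenClaw’s or Gmail’s real interface.

```python
import json
from typing import Callable


# Hypothetical tool. In practice this would call the Gmail API using a
# credential held by the harness, never exposed to the model.
def create_draft(to: str, subject: str, body: str) -> str:
    return f"draft created for {to}: {subject!r}"


# The allowlist lives in code, not in the prompt. There is no "send_email"
# entry, so no wording of a request can reach a send operation.
TOOLS: dict[str, Callable[..., str]] = {
    "create_draft": create_draft,
}


def dispatch(model_output: str) -> str:
    """Run a tool call proposed by the model only if it is allowlisted."""
    try:
        call = json.loads(model_output)
        name, args = call["tool"], call["args"]
    except (ValueError, KeyError, TypeError):
        return "rejected: malformed tool call"
    if not isinstance(name, str) or name not in TOOLS:
        return f"rejected: tool {name!r} is not permitted"
    if not isinstance(args, dict):
        return "rejected: args must be an object"
    try:
        return TOOLS[name](**args)
    except TypeError:
        return f"rejected: bad arguments for {name!r}"
```

The allowlist only means something if the dispatcher and its `TOOLS` table live where the agent can’t edit them, for example under a different Unix account. Otherwise the agent can simply add `send_email` back, just as OpenClaw will rewrite its own config file.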

Building this permission layer is the next major opportunity in agentic systems. There are many individual implementations: Cursor has allowlists and the like to help prevent models from wreaking havoc on dev machines; and traditional APIs using OAuth-style frameworks very often separate things like write and read permissions. What is needed, though, are more granular and more general approaches: a push notification every time an agent wants to access a payment token; a two-man-key approval for sending wires; a combination of a smart contract and human review to release an escrow payment.
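To make “more granular and more general” concrete, here is a minimal default-deny policy sketch in which each action maps to the number of distinct human approvals it needs: zero for drafting an email, one (say, a tap on a push notification) for reading a payment token, and two for sending a wire. The action names and the `Request`, `decide`, and `approve` helpers are hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass, field
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    DENY = "deny"
    PENDING = "pending"  # waiting for human approval


# Hypothetical policy: action name -> number of distinct human approvals needed.
# Anything not listed is denied outright (default-deny).
POLICY: dict[str, int] = {
    "email.draft": 0,         # runs without asking
    "payment_token.read": 1,  # e.g. the owner approves a push notification
    "wire.send": 2,           # two-person rule
}


@dataclass
class Request:
    action: str
    approvers: set[str] = field(default_factory=set)


def decide(req: Request) -> Decision:
    """Deterministic check; the model has no say in the outcome."""
    required = POLICY.get(req.action)
    if required is None:
        return Decision.DENY
    if len(req.approvers) >= required:
        return Decision.ALLOW
    return Decision.PENDING


def approve(req: Request, approver: str) -> Decision:
    """Record an approval; a set means repeat approvals by one person don't stack."""
    req.approvers.add(approver)
    return decide(req)
```

Storing approvers in a set is what turns the wire rule into a genuine two-person rule: one person approving twice still counts once. A real system would also have to authenticate each approval so that the agent can’t approve its own requests.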

Critically, these layers need to exist outside of the LLMs. Anthropic can’t just ship “Opus 4.7, now with permissions!” The model itself needs to be constrained by a software layer outside of it, in exactly the same way that we have restricted accounts and permissions for human users.

These approval layers (there can be many, of course) need to be effective, stay out of the way, be highly customizable, and account for odd edge cases. They also need to be very secure: the best LLMs are very good at working around constraints in pursuit of their goals, and they’ll bully through obstacles very impressively. Put another way, this problem is hard. Without this layer, agents will remain a niche product for techies and early adopters and struggle to go mainstream, especially with an adversarial media anxiously waiting to amplify every misstep.

Already, there are companies in our portfolio (e.g. Crossmint, Natural and Flowglad) building these kinds of tools. If you are thinking about this problem, please get in touch. We would love to talk to you.

[1] Even if you turn the temperature down to zero, you’ll still get a degree of randomness because of how parallel computation works: floating-point addition isn’t associative, so the order in which the hardware sums numbers can slightly change the results. This has always been true, but because LLMs generate recursively, feeding each token back in to produce the next, these small errors compound and can create real-world consequences.

[2] Although a part of me definitely respects the audacity of it. It’s like how I feel about base jumping.

[3] I eventually settled on a script, owned by another user, that has sole access to the API token. My agent passes it arguments by connecting to a Unix socket that is owned by that other user and writable by the agent. I have no idea if this is the most elegant way to do it, but it does keep a reasonable security model intact using traditional Unix-style account boundaries. Obviously, a truly malicious agent could find some way to fuzz my script or execute a privilege escalation attack on the OS; nothing is perfect.
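For readers who want to try the setup from footnote [3], here is a minimal Python sketch of such a broker. It is not the author’s actual script. It assumes the broker runs as a separate user, the agent’s user shares a group with it, and each request arrives as one line of JSON. The socket path, the `draft` action, and the field names are hypothetical, and the real mail-API call is omitted.

```python
import json
import os
import socketserver

# Hypothetical path; the directory would be owned by the broker user.
SOCKET_PATH = "/run/mail-broker/broker.sock"
MAX_REQUEST_BYTES = 64 * 1024


def handle_request(raw: bytes) -> bytes:
    """Validate one JSON request. Only the 'draft' action is accepted."""
    try:
        req = json.loads(raw)
    except ValueError:  # covers JSONDecodeError and invalid UTF-8
        return b'{"ok": false, "error": "bad json"}\n'
    if not isinstance(req, dict) or req.get("action") != "draft":
        return b'{"ok": false, "error": "action not permitted"}\n'
    fields = {k: req.get(k) for k in ("to", "subject", "body")}
    if not all(isinstance(v, str) for v in fields.values()):
        return b'{"ok": false, "error": "missing or non-string field"}\n'
    # The real broker would create the draft here with its own API token,
    # which the agent's user cannot read. That call is omitted from the sketch.
    return json.dumps({"ok": True, "drafted_to": fields["to"]}).encode() + b"\n"


class BrokerHandler(socketserver.StreamRequestHandler):
    def handle(self) -> None:
        # One request per connection, capped in size.
        line = self.rfile.readline(MAX_REQUEST_BYTES)
        self.wfile.write(handle_request(line))


def serve(path: str = SOCKET_PATH) -> None:
    """Run as the broker user; assumes the agent's user is in the socket's group."""
    if os.path.exists(path):
        os.unlink(path)  # remove a stale socket left by a previous run
    with socketserver.UnixStreamServer(path, BrokerHandler) as server:
        # On Linux, connecting to a Unix socket requires write permission on it,
        # so owner + group read/write lets the agent connect while others cannot.
        os.chmod(path, 0o660)
        server.serve_forever()
```

The broker user would run `serve()`, and the agent would connect and write a single line such as `{"action": "draft", "to": "a@example.com", "subject": "Hi", "body": "Hello"}`. Anything other than a well-formed draft request is refused before the token is touched. Because `chmod` runs after the socket is created, a stricter version would tighten the umask before binding.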